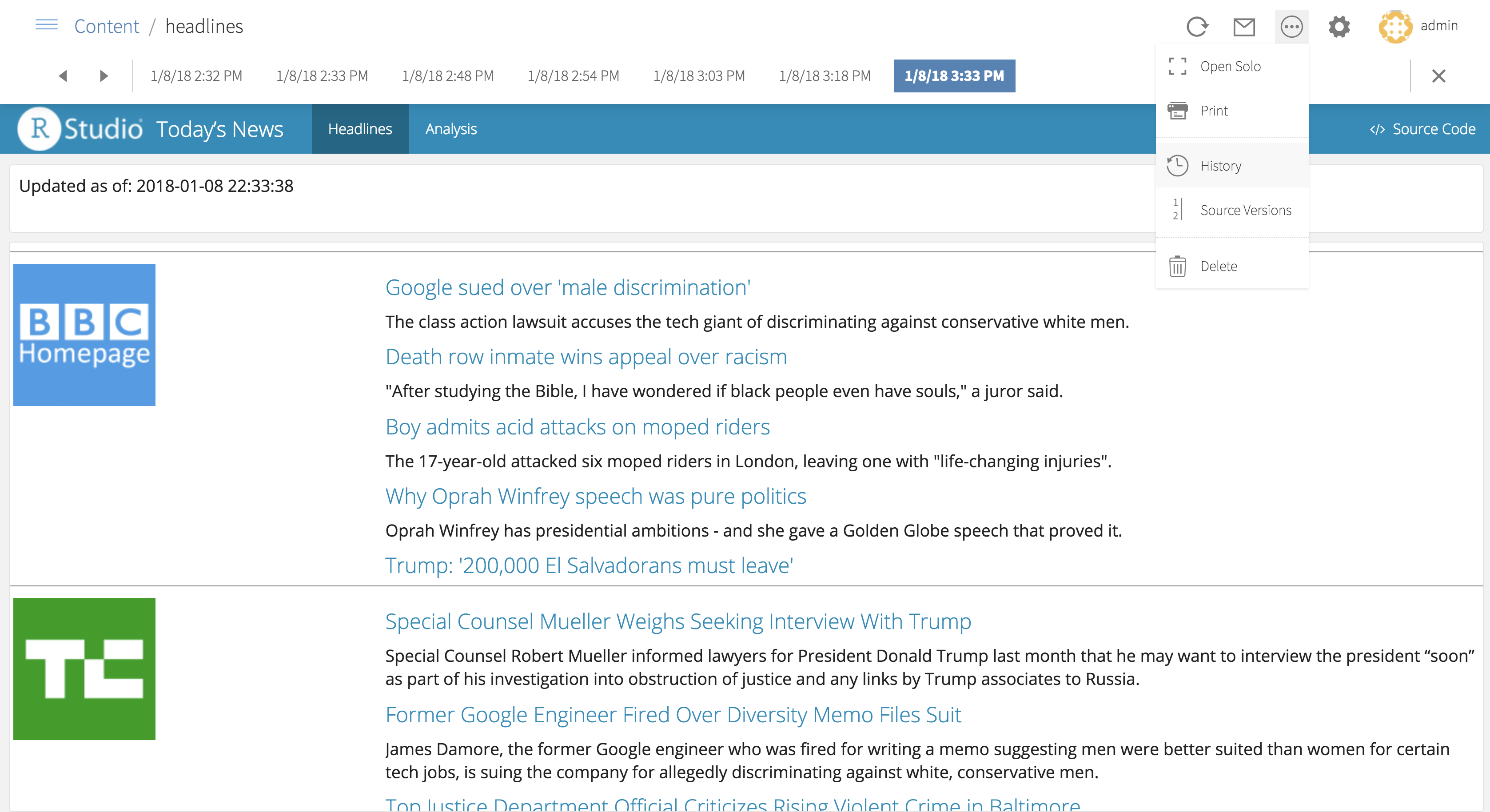

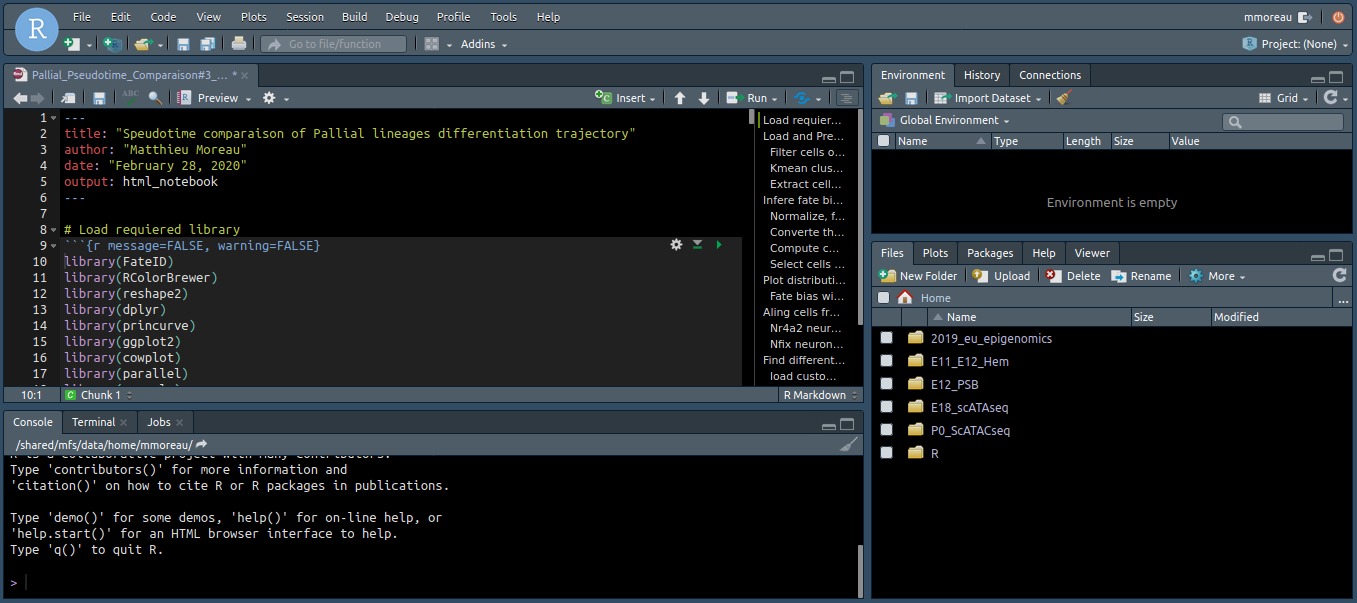

I am trying to create a catalogue of all the pieces written for a law school journal, including many identifiers. I thought it would be fun to try creating this via data scraping rather than manual input. However, I have come across a couple of problems. I tried to include all the relevant information below, but let me know if you have any questions.

(1) I want to label each piece based on type (e.g., Articles or Notes), but the type is only listed once, as the header. I was able to list each of the headers but couldn't get them to match up with the items underneath the header (i.e., to assign a "Type" value of "Articles" to each piece under the Articles heading). I could do this manually by repeating "Articles" multiple times, but this is not consistent between journal issues, so I was hoping to connect this to the actual heading on the webpage.

(2) To solve the problem in #1 above, I thought about doing separate scrapes for each type of piece and then combining the dataframes. However, this was a bit of a problem when getting the URLs for the PDFs. My first attempt below worked, but gave me the PDFs for all eight documents on the page:

`The_website %>% html_nodes(PDF_link_tag) %>% html_children() %>% html_attr("href")`

So I used Selector Gadget to select only the PDF URLs for the two Articles, but it gave me errors. Here I tried a slightly different click on the PDF icon. Here is the HTML code from the webpage for the Volume 39, Issue 1 Articles section. Maybe the correct tag could be found in the code, or I'm just using Selector Gadget improperly. I tried using the Web Scraper Extension, but I couldn't figure out how to generate the tag I wanted (it seemed to just want to scrape directly for me).

And here is my current R code and what it generates. Here is the end product I want (using Volume 39, Issues 1 & 2).

- Oil: Resource Balancing in the Arctic National Wildlife Refuge
- Coastal Marine Debris in Alaska: Problems with Plastics, Pollution, & Policy
- The Kenai Rule in Four Acts: Bear Baiting, Firearms, and Hunting: Comment & Analysis of Alaska v. Bernhardt
- Protecting Subsistence Lands While Boosting the Bottom Line: The Enhanced Federal Tax Incentive Available to Alaska Native Corporations for Donations of Conservation Easements
- Heat Waves and a Public-Private Partnership in Alaska
- Keynote Address: Alaska Native Peoples and the Environment
- Renewed Debate over Alaska's Establishment Clause: Hunt v. Kenai Peninsula Borough and the Church of the Flying Spaghetti Monster
- The Plaintiff's Plight: Altering Alaska's Rule 82 to Better Compensate Plaintiffs
- It Takes a Village: Repurposing Takings Doctrine to Address Melting Permafrost in Alaska Native Towns
- Strangers in Their Own Land: A Survey of the Status of the Alaska Native People from the Russian Occupation Through the Turn of the Twentieth Century
- Alaska's Lengthy Sentences Are Not the Answer to Sex Offenses
- Consciousness of Wrongdoing: Mens Rea in Alaska, Barry Jeffrey Stern, 1
- Employment Discrimination Law-Strand v. Petersburg Public School and Fridriksson v. Alaska USA Federal Credit Union: The Supreme Court Charts an Uncertain Course, W.
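One possible way to attach each heading to the pieces beneath it is sketched below. The page's actual HTML is not reproduced here, so the markup is entirely hypothetical: the `h3` headings, the `div.piece` containers, and the `a.title` and `a.pdf` classes are assumptions that would need to be swapped for whatever Selector Gadget reports on the real page. The idea is to select every piece first, then look up the nearest preceding heading for each one with a relative XPath, so the type, title, and PDF link stay aligned row by row.

```r
library(rvest)

# Hypothetical markup: every tag and class name below is an assumption,
# standing in for the real journal page.
sample_html <- '<div class="issue">
  <h3>Articles</h3>
  <div class="piece"><a class="title" href="/a1">First Article</a><a class="pdf" href="/a1.pdf">PDF</a></div>
  <div class="piece"><a class="title" href="/a2">Second Article</a><a class="pdf" href="/a2.pdf">PDF</a></div>
  <h3>Notes</h3>
  <div class="piece"><a class="title" href="/n1">First Note</a><a class="pdf" href="/n1.pdf">PDF</a></div>
</div>'

page <- read_html(sample_html)

# One node per piece; html_node() then returns exactly one result per piece,
# which keeps the columns the same length.
pieces <- page %>% html_nodes("div.piece")

catalogue <- data.frame(
  # Nearest h3 that comes before the piece at the same nesting level
  type = pieces %>%
    html_node(xpath = "preceding-sibling::h3[1]") %>%
    html_text(trim = TRUE),
  title = pieces %>% html_node("a.title") %>% html_text(trim = TRUE),
  pdf_url = pieces %>% html_node("a.pdf") %>% html_attr("href"),
  stringsAsFactors = FALSE
)

# Keep only the pieces listed under the Articles heading
articles <- catalogue[catalogue$type == "Articles", ]
```

Because the type is looked up per piece, this avoids separate scrapes for each heading, and filtering on `type` afterwards gives the PDF links for the Articles alone. If the real headings are not siblings of the pieces, the XPath would have to change accordingly.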
